Azure DevTest Labs is a fantastic tool to quickly build environments for development and test purposes, or for a classroom. It offers great tools to restrict users without removing all their freedom. It speeds up onboarding with its claimable VMs, which are already created and waiting for the user. Formulas help ensure that you always get the latest version of your artifacts installed on those VMs. And finally, auto-shutdown keeps your money where it should stay... in your pocket.

In this post, I will show you how to deploy an Azure DevTest Lab with an Azure Resource Manager (ARM) template and create the claimable VMs based on your formulas in one shot.

Step 1 - The ARM template

First, we need an ARM template. You can start from scratch, of course, but that can be a lot of work if you are just getting started. You can also pick one from GitHub and customize it.

What I recommend is to create a simple Azure DevTest Lab directly from the Azure portal. Once your lab is created, go to the Automation script option of the resource group and copy/paste the ARM template into your favorite text editor.
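If you prefer the command line, the Azure CLI's `az group export` command exports a similar template for a whole resource group. Here is a minimal sketch, assuming the Azure CLI is installed and you are already logged in with `az login`; the function name `export_lab_template` is hypothetical.

```bash
#!/usr/bin/env bash
# Hypothetical helper: export the ARM template of a resource group to a file,
# as a command-line alternative to the portal's Automation script option.
export_lab_template() {
    local resource_group="$1"
    local output_file="$2"

    # az group export writes the template JSON to stdout
    az group export --name "$resource_group" > "$output_file" || return 1
    echo "---> Template for $resource_group exported to $output_file"
}

# Example: export_lab_template cloud5mins DevTest.json
```

The exported file still needs the same cleanup as the one copied from the portal.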

Now you must clean it. If you don't know it already, use the 5 Simple Steps to Get a Clean ARM Template method; it's an excellent way to get started.

Once the template is clean, we need to add a few things that didn't follow during the export. Usually, in an ARM template, you get one list named `resources`. However, a DevTest Lab also contains a list named `resources` of its own, but it's probably missing.

```json
{
    "parameters": {},
    "variables": {},
    "resources": []
}
```

Inside the lab's own `resources` list, we need to add the `virtualnetworks`. It's also a good idea to add `schedules` and `notificationChannels`. Those two are used to shut down all the VMs automatically and to send a notification to the user just before.

```json
{
    "$schema": "https://schema.management.azure.com/schemas/2015-01-01/deploymentTemplate.json#",
    "contentVersion": "1.0.0.0",
    "parameters": {
        ...
    },
    "variables": {
        ...
    },
    "resources": [
        {
            "type": "Microsoft.DevTestLab/labs",
            "name": "[variables('LabName')]",
            "apiVersion": "2016-05-15",
            "location": "[resourceGroup().location]",
            "resources": [
                {
                    "apiVersion": "2017-04-26-preview",
                    "name": "[variables('virtualNetworksName')]",
                    "type": "virtualnetworks",
                    "dependsOn": [
                        "[resourceId('microsoft.devtestlab/labs', variables('LabName'))]"
                    ]
                },
                {
                    "apiVersion": "2017-04-26-preview",
                    "name": "LabVmsShutdown",
                    "type": "schedules",
                    "dependsOn": [
                        "[resourceId('Microsoft.DevTestLab/labs', variables('LabName'))]"
                    ],
                    "properties": {
                        "status": "Enabled",
                        "timeZoneId": "Eastern Standard Time",
                        "dailyRecurrence": {
                            "time": "[variables('ShutdowTime')]"
                        },
                        "taskType": "LabVmsShutdownTask",
                        "notificationSettings": {
                            "status": "Enabled",
                            "timeInMinutes": 30
                        }
                    }
                },
                {
                    "apiVersion": "2017-04-26-preview",
                    "name": "AutoShutdown",
                    "type": "notificationChannels",
                    "properties": {
                        "description": "This option will send notifications to the specified webhook URL before auto-shutdown of virtual machines occurs.",
                        "events": [
                            {
                                "eventName": "Autoshutdown"
                            }
                        ],
                        "emailRecipient": "[variables('emailRecipient')]"
                    },
                    "dependsOn": [
                        "[resourceId('Microsoft.DevTestLab/labs', variables('LabName'))]"
                    ]
                }
            ],
            "dependsOn": []
        }
        ...
```

Step 2 - The Formulas

Now that the DevTest Lab is well defined, it's time to add our formulas. If you have already created some from the portal, don't look for them in the template: at the moment, the export doesn't script the formulas.

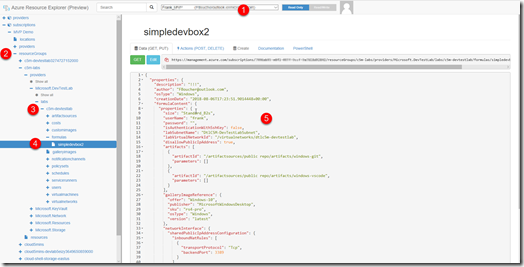

A quick way to get the JSON of your formulas is to create them from the portal and then use Azure Resource Explorer to get the code.

In a web browser, navigate to https://resources.azure.com to open Resource Explorer. Select the subscription, resource group, and lab you are working on. In the formulas node, you should see your formulas; click one and let's bring its JSON into our ARM template. Paste it at the top-level `resources` list (the main one, not the one inside the lab).
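The Azure CLI can also fetch a formula's JSON with `az lab formula show`, which is handy if you would rather script this step. Here is a minimal sketch, assuming you are logged in with the Azure CLI; the helper name `save_formula_json` and the default output file name are hypothetical. As with the Resource Explorer view, the output is the resource as returned by Azure, read-only fields such as `id` included, so expect to trim it before pasting it into the template.

```bash
#!/usr/bin/env bash
# Hypothetical helper: save one formula's JSON to a file
# (defaults to <formula name>.json in the current directory).
save_formula_json() {
    local lab_name="$1"
    local resource_group="$2"
    local formula_name="$3"
    local output_file="${4:-$formula_name.json}"

    # Prints the formula resource as returned by Azure, read-only fields included
    az lab formula show --lab-name "$lab_name" -g "$resource_group" \
        --name "$formula_name" --output json > "$output_file" || return 1
    echo "---> Saved $output_file"
}

# Example: save_formula_json C5M-DevTestLab cloud5mins SimpleDevBox
```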

Step 2.5 - The Azure KeyVault

You shouldn't put any password inside your ARM template; however, having passwords predefined inside the formulas is pretty convenient. One solution is to use an Azure KeyVault.

Let's assume the KeyVault already exists; I will explain how to create it later. In your parameter file, add a parameter named `adminPassword` that references the KeyVault. We also need to specify the secret we want to use; in this case, the password is in a secret named `vmPassword`.

```json
"adminPassword": {
    "reference": {
        "keyVault": {
            "id": "/subscriptions/{xxxxxxxx-xxxx-xxxx-xxxx-xxxxxxxxxxxx}/resourceGroups/cloud5mins/providers/Microsoft.KeyVault/vaults/Cloud5minsVault"
        },
        "secretName": "vmPassword"
    }
}
```

Step 3 - The ARM Claimable VMs

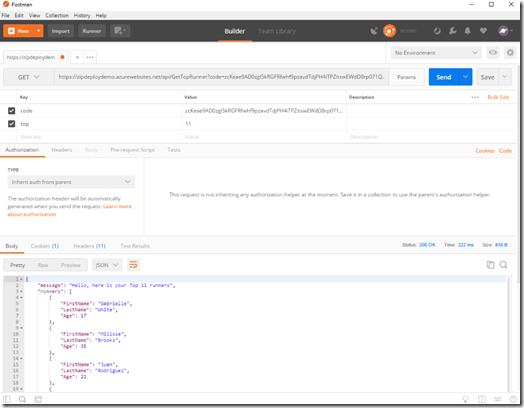

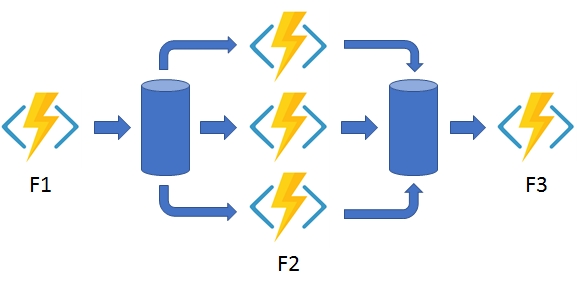

Now we have a lab and the formulas; the only thing missing is the claimable VMs based on those formulas. It's impossible to create both the formulas and the VMs in one ARM template, so the alternative is to use a script that creates our VMs right after the deployment.
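The full script further down stores the output of `az lab formula list` in a single variable, which works when the lab has exactly one formula. If your lab ends up with several formulas, one option is to loop over them and create one VM per formula. This is a minimal sketch of that idea, not the author's script: the function name and the `<formula>VM` naming scheme are hypothetical, and it assumes the deployment has already completed.

```bash
#!/usr/bin/env bash
# Hypothetical helper: create one VM per formula found in the lab.
# Naming scheme "<formula>VM" is an assumption; Windows VM names are limited
# to 15 characters, so adapt it to your formula names.
create_vms_from_formulas() {
    local lab_name="$1"
    local resource_group="$2"
    local formula

    # tsv output gives one formula name per line
    az lab formula list --lab-name "$lab_name" -g "$resource_group" \
        --query "[].name" --output tsv |
    while IFS= read -r formula; do
        if [ -n "$formula" ]; then
            echo "---> Creating VM ${formula}VM from formula $formula"
            # < /dev/null keeps az from consuming the remaining names on stdin
            az lab vm create --lab-name "$lab_name" -g "$resource_group" \
                --name "${formula}VM" --formula "$formula" < /dev/null
        fi
    done
}

# Example: create_vms_from_formulas C5M-DevTestLab cloud5mins
```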

```bash
az group deployment create --name test-1 --resource-group cloud5mins --template-file DevTest.json --parameters DevTest.parameters.json --verbose

az lab vm create --lab-name C5M-DevTestLab -g cloud5mins --name FrankDevBox --formula SimpleDevBox
```

Here is part of a script that creates a KeyVault if it doesn't exist and populates it. Then it deploys our ARM template and, finally, creates our claimable VM. You can find all the code in my GitHub project: Azure-Devtest-Lab-efficient-deployment-sample.

```bash
# [...]

# Checking for a KeyVault
searchKeyVault=$(az keyvault list -g "$resourceGroupName" --query "[?name=='$keyvaultName'].name" -o tsv)

if [ -z "$searchKeyVault" ]; then
    echo "---> Creating keyvault: $keyvaultName"
    # Resource Manager can only read secrets from a vault with this flag enabled
    az keyvault create --name "$keyvaultName" --resource-group "$resourceGroupName" --location "$resourceGroupLocation" --enabled-for-template-deployment true
else
    echo "---> The KeyVault $keyvaultName already exists"
fi

echo "---> Populating KeyVault..."
# Demo password only; don't hard-code real secrets in a script
az keyvault secret set --vault-name "$keyvaultName" --name 'vmPassword' --value 'cr@zySheep42!'

# Deploy the DevTest Lab
echo "---> Deploying..."
az group deployment create --name "$deploymentName" --resource-group "$resourceGroupName" --template-file "$templateFilePath" --parameters "$parameterFilePath" --verbose

# Create the VMs using the formula created in the deployment
# (queries are quoted so bash doesn't treat [*] as a glob pattern)
labName=$(az resource list -g "$resourceGroupName" --resource-type "Microsoft.DevTestLab/labs" --query "[*].[name]" --output tsv)

# Assumes the lab contains a single formula
formulaName=$(az lab formula list -g "$resourceGroupName" --lab-name "$labName" --query "[*].[name]" --output tsv)

echo "---> Creating VM(s)..."
az lab vm create --lab-name "$labName" -g "$resourceGroupName" --name FrankSDevBox --formula "$formulaName"

echo "---> done <---"
```

In a video, please!

I also have a video of this post if you prefer.

Conclusion

Whether it's for development, testing, or training, as soon as you create environments in Azure, DevTest Labs is definitely a must. It's a very powerful tool that not enough people know about. Give it a try and let me know: what do you do with Azure DevTest Labs?

References:

- Azure-Devtest-Lab-efficient-deployment-sample: https://github.com/FBoucher/Azure-Devtest-Lab-efficient-deployment-sample

- An Overview of Azure DevTest Labs: https://www.youtube.com/watch?v=caO7AzOUxhQ

- Best practices Using Azure Resource Manager (ARM) Templates: https://www.youtube.com/watch?v=myYTGsONrn0&t=7s

- 5 Simple Steps to Get a Clean ARM Template: http://www.frankysnotes.com/2018/05/5-simple-steps-to-get-clean-arm-template.html
